The primary failure mode in modern generative video production isn’t resolution; it is motion drift. For creative operations leads, the challenge of integrating an AI Video Generator into a repeatable asset pipeline is rarely about getting a single beautiful frame. It is about whether the subject’s face morphs into a different person by second four, or whether a camera pan causes the background architecture to melt like wax.

In a production environment, temporal consistency—the ability of a video to maintain its visual logic from the first frame to the last—is the metric that determines whether a tool is a toy or a professional asset. When we talk about motion control, we are essentially talking about the struggle to keep the latent space from wandering away from the intended narrative.

The Mechanics of Temporal Decoherence

To manage motion, one must understand why it fails. Most video models operate on a frame-prediction or a latent-diffusion-over-time architecture. When you prompt for movement, the AI is essentially guessing what the next iteration of pixels should look like based on a noise pattern. Without strict guidance, the model often prioritizes “cool-looking motion” over “consistent geometry.”

This manifests as “shimmering” or “ghosting,” where details like buttons on a shirt or the number of fingers on a hand fluctuate during a movement. From a benchmark perspective, we see a clear divide between models that use sparse attention mechanisms and those that use full temporal attention. The former are faster but prone to jitter; the latter are more stable but far more expensive to compute, because the cost of full attention grows roughly quadratically with the number of tokens being attended to. When evaluating any AI Video Generator, the first test should always be a slow, 360-degree rotation around a complex object. If the object’s structural integrity holds, the model has a strong temporal foundation.
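To make the cost gap concrete, here is a rough back-of-the-envelope sketch. It assumes a clip is represented as a number of latent frames with a fixed number of tokens per frame, and it compares the count of query-key pairs under full spatiotemporal attention against a factorized scheme (spatial attention inside each frame plus temporal attention across frames at each position), which is one common form of sparse attention. The function names and the example sizes are illustrative assumptions, not figures from any specific model.

```python
def full_attention_pairs(frames: int, tokens_per_frame: int) -> int:
    """Every token attends to every other token in the whole clip."""
    total_tokens = frames * tokens_per_frame
    return total_tokens * total_tokens


def factorized_attention_pairs(frames: int, tokens_per_frame: int) -> int:
    """Spatial attention within each frame, plus temporal attention
    across frames at each spatial position."""
    spatial = frames * tokens_per_frame ** 2
    temporal = tokens_per_frame * frames ** 2
    return spatial + temporal


# Illustrative sizes only: 16 latent frames of 1024 tokens each.
frames, tokens = 16, 1024
full = full_attention_pairs(frames, tokens)
factorized = factorized_attention_pairs(frames, tokens)
print(f"full: {full:,}  factorized: {factorized:,}  ratio: {full / factorized:.1f}x")
```

Doubling the clip length quadruples the full-attention pair count, which is why longer, more stable clips get expensive quickly.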

Decoupling Camera Movement from Subject Motion

A common mistake in early AI video prompting is the “kitchen sink” approach, where camera instructions and subject actions are mashed together in a single sentence. This often results in the model getting confused about which pixels should be moving in relation to the frame.

In a professional workflow, operators should attempt to decouple these variables.

Camera movement—pans, tilts, zooms, and dollies—should be treated as the “container” for the scene. Subject motion—walking, talking, or gesturing—is the “content.” If you tell an AI to “have a man run while the camera zooms in,” you are asking the model to calculate two different types of depth changes simultaneously. Often, the current AI Video Generator ecosystem handles this by flattening one of the movements, making the man appear to run in place or making the zoom feel like a digital crop rather than an optical shift.

To gain better control, technical operators often use “motion weights” or specific directional tokens. For example, using terms like “static tripod” or “fixed focal length” can actually help the model focus its computational budget on the subject’s movement rather than trying to reinvent the background every 24 frames.
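One way to enforce this separation is to keep camera and subject instructions in separate fields and only join them at the last step. The sketch below is a minimal illustration: the `ShotPrompt` class, its field names, and the `Camera:` clause format are hypothetical, and no particular generator is known to require this syntax.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ShotPrompt:
    subject: str         # who or what is in the scene
    subject_motion: str  # the "content": what the subject does
    camera: str = "static tripod, fixed focal length"  # the "container"

    def render(self) -> str:
        # Camera and subject instructions stay in separate clauses
        # instead of being mashed into one sentence.
        return f"{self.subject}, {self.subject_motion}. Camera: {self.camera}."


# Subject moves, camera is locked off.
locked = ShotPrompt("a man in a grey coat", "running along a pier")
# Camera moves, subject is held still.
moving = ShotPrompt("a man in a grey coat", "standing still", camera="slow dolly in")

print(locked.render())
print(moving.render())
```

Varying only one field per test makes it easier to see whether a failed take came from the camera instruction or the subject instruction.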

The Limitation of Physical Logic

It is important to acknowledge a significant limitation in the current state of the art: these models do not actually “understand” physics. They are probability engines. When a ball hits a wall in an AI-generated clip, the model doesn’t calculate the bounce based on velocity and mass. It simply knows that, historically, in its training data, a round object near a flat surface often changes direction.

This lack of a physics engine leads to what we call “non-Newtonian artifacts.” You might see a person walk through a solid table or a car turn a corner while its wheels remain stationary. There is an inherent uncertainty here; no matter how precise your prompt is, the model may still produce a “jelly-like” movement where objects stretch unnaturally. Expecting 100% physical accuracy is a recipe for frustration in the current generation of tools. We are still in an era where curation is as important as generation.

Prompting for Dynamic Range without Losing the Plot

When scaling an AI Video Generator workflow, the goal is to create a library of reliable “motion primitives.” These are prompt structures that have been benchmarked to produce consistent results across different seeds.

Instead of using subjective adjectives like “fast” or “energetic,” operators should use technical terminology that mimics cinematography. “High shutter speed” can reduce motion blur artifacts. “Low-angle tracking shot” provides a geometric anchor for the model to follow. By using the language of film, you are tapping into a specific subset of the model’s training data that is likely higher quality and more consistent than generic social media footage.
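A library of motion primitives can be as simple as a dictionary of named templates. The sketch below uses only the Python standard library. The primitive names and the exact template wording are illustrative assumptions that a team would replace with phrasing it has actually benchmarked.

```python
from string import Template

# Hypothetical primitives; each template wording is a placeholder,
# not a benchmarked prompt.
MOTION_PRIMITIVES = {
    "locked_off": Template("$subject, static tripod, fixed focal length"),
    "low_track": Template("low-angle tracking shot of $subject"),
    "crisp_action": Template("$subject, high shutter speed"),
}


def build_prompt(primitive: str, subject: str) -> str:
    """Fill a named motion primitive with a subject description."""
    try:
        template = MOTION_PRIMITIVES[primitive]
    except KeyError:
        raise ValueError(f"unknown motion primitive: {primitive!r}") from None
    return template.substitute(subject=subject)


print(build_prompt("low_track", "a cyclist on a coastal road"))
```

Rejecting unknown primitive names keeps ad hoc adjectives like “fast” from creeping back into the pipeline unnoticed.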

The Role of Image-to-Video (I2V) in Consistency

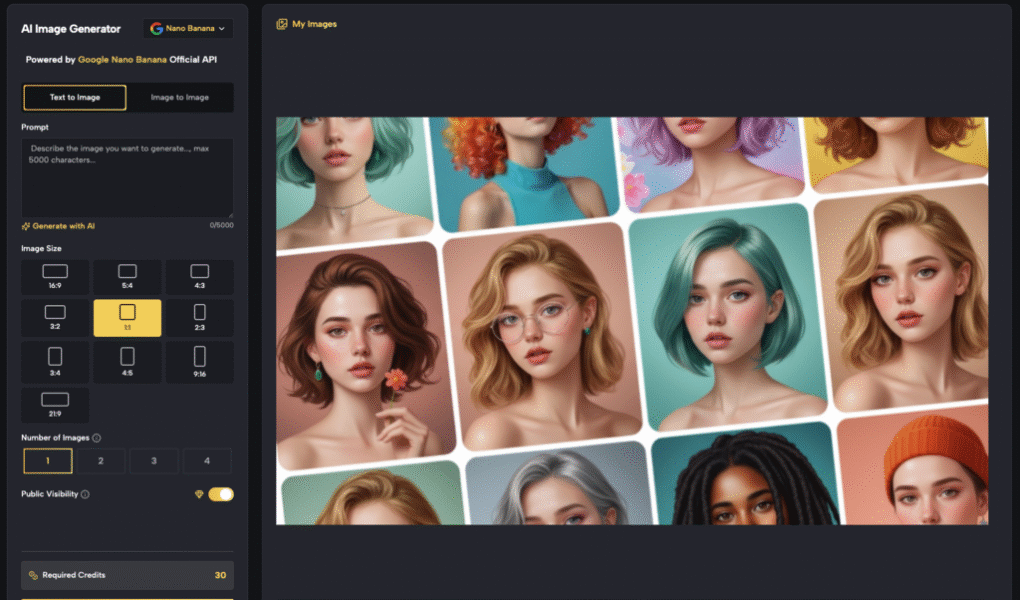

One of the most effective ways to bypass the “drift” of text-to-video is to start with a high-fidelity image. This provides a “ground truth” for the AI. By using a tool like the MakeShot image generator to create a baseline frame, and then feeding that into the video pipeline, you lock in the character design, the lighting, and the environmental details.

The motion then becomes an extension of an existing structure rather than an attempt to manifest a structure from thin air. This is the preferred method for brand-sensitive work where the product must look identical in every shot. Even then, there is a “temporal wall”—usually around the 4-to-6 second mark—where the model’s memory of that original image begins to fade, and the drift starts to take over.

Benchmarking Motion Fluidity vs. Narrative Coherence

There is often a trade-off between how “fluid” a video looks and how well it follows a narrative. High-motion models, such as Kling or Sora-class architectures, can produce incredibly cinematic sweeps, but they often struggle with specific, multi-step instructions.

For instance, asking a character to “pick up a cup, take a sip, and set it down” is a complex narrative chain. In most cases, the AI will fail at the points of contact. The hand might merge with the cup, or the cup might disappear into the face.

We have to reset expectations regarding “one-shot” generation. In a professional pipeline, these complex actions are often broken down into three separate clips and joined in post-production. The “operator” isn’t someone who just types a prompt; they are the person who understands how to storyboard around the AI’s current inability to handle complex object permanence.

The Uncertainty of Multi-Object Interaction

A second major limitation occurs when multiple dynamic subjects are introduced to a scene. If you have two people dancing, the model has to track the limb positions of both individuals while also calculating their spatial relationship to one another.

The “collision detection” in generative video is notoriously poor. More often than not, the two figures will eventually blend into a single, multi-limbed entity. For creative leads, this means that “crowd shots” or complex interactions are currently high-risk, low-reward endeavors. It is usually more efficient to generate individual subjects against a clean background and composite them later using traditional VFX techniques.

Managing the Production Pipeline

Integrating these tools requires a shift in how we think about “unit economics” in creative production. The cost of a generative video isn’t just the subscription fee; it is the human hours spent on “fishing” for a usable take.
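A rough way to put a number on this is to divide the cost of one attempt by the fraction of attempts that turn out usable. The sketch below assumes each attempt succeeds independently with the same probability, so the expected number of attempts per usable take is the reciprocal of that probability. The function name, parameters, and example figures are all hypothetical.

```python
def cost_per_usable_take(
    cost_per_generation: float,
    review_minutes: float,
    hourly_rate: float,
    stability_rate: float,
) -> float:
    """Expected cost of one usable take.

    stability_rate is the fraction of generations judged usable (0 < rate <= 1).
    Attempts are assumed independent, so expected attempts = 1 / stability_rate.
    """
    if not 0 < stability_rate <= 1:
        raise ValueError("stability_rate must be in (0, 1]")
    per_attempt = cost_per_generation + (review_minutes / 60) * hourly_rate
    return per_attempt / stability_rate


# Placeholder numbers: 0.50 per render, 3 minutes of review at 60 per hour,
# and one usable take in four.
print(round(cost_per_usable_take(0.50, 3, 60, 0.25), 2))
```

With these placeholder inputs, the human review time dominates the render fee, which is the point the paragraph above makes.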

A benchmark-driven approach involves:

- Seed Stress-Testing: Running the same prompt across 20 different seeds to identify the “stability rate.”

- A/B Testing Models: Using different backends (like Veo vs. Nano Banana) to see which handles specific types of motion better.

- Post-Generation Stabilization: Using third-party tools to lock down “micro-jitters” that the AI Video Generator might leave behind.
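The seed stress test in the list above can be sketched as a small harness. The `generate` and `is_usable` callables are assumptions standing in for whatever generation backend and review step a team actually uses; the `fake_` functions below exist only so the example runs on its own.

```python
from typing import Callable, Iterable, TypeVar

Clip = TypeVar("Clip")


def stability_rate(
    prompt: str,
    seeds: Iterable[int],
    generate: Callable[[str, int], Clip],
    is_usable: Callable[[Clip], bool],
) -> float:
    """Fraction of seeds for which the generated clip passes review."""
    seed_list = list(seeds)
    if not seed_list:
        raise ValueError("at least one seed is required")
    usable = sum(1 for seed in seed_list if is_usable(generate(prompt, seed)))
    return usable / len(seed_list)


# Stand-ins for a real backend and a real review step (hypothetical).
def fake_generate(prompt: str, seed: int) -> int:
    return seed  # a real implementation would return a clip


def fake_is_usable(clip: int) -> bool:
    return clip % 4 == 0  # a real check would be a human or automated review


rate = stability_rate(
    "low-angle tracking shot of a cyclist", range(20), fake_generate, fake_is_usable
)
print(f"stability rate: {rate:.0%}")
```

Passing the backend in as a callable also covers the A/B case: the same prompt and seed list can be run against two backends and the two rates compared directly.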

Operators who succeed are those who treat the AI as a source of “raw material” rather than a finished product. They look for the 2 seconds of perfect motion within a 5-second clip and discard the rest.
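Finding that window can be partly automated if each frame has a quality score. The sketch below assumes such per-frame scores already exist (for example from a reviewer or a consistency metric, neither of which is specified here) and uses a sliding window to locate the highest-scoring contiguous stretch. The function name and the example scores are hypothetical.

```python
from typing import Sequence, Tuple


def best_window(
    frame_scores: Sequence[float], fps: float, seconds: float
) -> Tuple[int, int]:
    """Return (start, end) frame indices, end exclusive, of the contiguous
    window of the requested duration with the highest total score."""
    window = int(round(fps * seconds))
    if window <= 0 or window > len(frame_scores):
        raise ValueError("window must fit inside the clip")
    current = sum(frame_scores[:window])
    best_sum, best_start = current, 0
    for start in range(1, len(frame_scores) - window + 1):
        # Slide by one frame: add the entering frame, drop the leaving one.
        current += frame_scores[start + window - 1] - frame_scores[start - 1]
        if current > best_sum:
            best_sum, best_start = current, start
    return best_start, best_start + window


# Toy example: one score per second, looking for the best 2-second stretch.
scores = [2, 9, 9, 1, 8, 10, 10, 3]
print(best_window(scores, fps=1, seconds=2))
```

On ties the earliest window wins, so an operator who prefers later footage would need to adjust the comparison.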

Future Trajectories: From Pixels to Vectors?

As we look toward the next iteration of video tools, the trend is moving away from “pure” diffusion toward more hybrid models that incorporate 3D-aware layers. This would allow an operator to define a 3D path for a camera to follow, ensuring that the perspective change is mathematically correct rather than just visually estimated.

Until those tools become mainstream, the best defense against motion drift is a combination of technical prompting, starting from a static image, and a healthy skepticism of the model’s ability to maintain logic over long durations. The goal isn’t to eliminate drift entirely—that’s currently impossible—but to manage it so that it stays within the margins of professional acceptability.

The “pro” in “pro-grade AI video” doesn’t come from the tool itself, but from the operator’s ability to navigate these limitations. We are moving from an era of “prompting” to an era of “steering,” where the value lies in knowing exactly where the AI is likely to break and building a workflow that accommodates those cracks.